The burden of brain disease in Europe continues to rise. Currently, more than one in three individuals in Europe suffers from a disorder of the brain.1 Brain diseases carry an estimated yearly economic cost of 789 billion Euro,2 weighing heavily on personal, social, and economic well-being. Globally, mental disorders account for a disproportionately large percentage of disability-adjusted life years (56.7%), followed by neurological disorders (28.6%),3 illustrating the deleterious effects these disorders have on the individual and society. Efforts to reduce this burden have led to multi-disciplinary collaboration among neuroscientists, sociologists, clinicians, and public health officials, with a particular focus on the interplay between genetics and the environment, often referred to as “nature versus nurture”, in the development of these diseases.

Gaining a better understanding of the causal risk factors associated with psychiatric and neurological disease may aid in efforts to prevent the development of these often disabling conditions and subsequently reduce this burden overall.

Dr. Andreas Meyer-Lindenberg addresses the need to better understand, and potentially prevent, the development of these disorders, and a possible approach to doing so.

Genetics and the Environment: Disease Development and Course

Although it is possible that a small number of genes might directly influence the pathogenesis of disease, it is more likely that the relevant genes influence a range of genetically influenced intermediate characteristics that subsequently affect the risk for the development of a disorder.7

The interplay between genetics (nature) and the environment (nurture) in the development and course of disease has long been studied. Only recently, however, has comprehensive genomic analysis become financially feasible. While whole genome sequencing (WGS) cost over $3 billion USD 13 years ago, it can now be accomplished in under two weeks for less than $1,000 USD.4 WGS may help both researchers and clinicians to understand the complex molecular systems that affect health, disease, and drug response, and may subsequently function as a key driver of psychiatric neuroscience.5

While genomics may lead to a better understanding of the heritability of some disease states, the degree to which one’s genetic disposition and one’s environment influence the development of psychiatric and neurological disorders varies across individuals, disorders, and a number of other facets.6 The development of psychiatric disease depends on the degree to which a range of genetic, environmental, and psychological risk factors interact in any given individual.6,7,8,9 In general, individuals with a low genetic risk of developing a disorder require a high level of exposure to environmental risks to trigger the disease, whereas individuals with a high degree of genetic susceptibility may require a lower level of environmental exposure to trigger its onset.
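The trade-off between genetic risk and environmental exposure described above can be pictured with a deliberately simple sketch. It assumes, purely for illustration, that genetic liability and environmental exposure add up on a common scale and that disease onset occurs once their sum reaches a fixed threshold. The function name, the units, and the threshold value are hypothetical and are not taken from the cited studies.

```python
def required_exposure(genetic_liability: float, threshold: float = 1.0) -> float:
    """Environmental exposure needed to reach the onset threshold.

    Assumes an additive model in arbitrary, hypothetical units:
    onset occurs when genetic_liability + exposure >= threshold.
    """
    # A person whose genetic liability already meets the threshold needs no exposure.
    return max(0.0, threshold - genetic_liability)


# Hypothetical individuals with low and high genetic liability.
low_genetic_risk = required_exposure(0.2)
high_genetic_risk = required_exposure(0.8)

print(f"Low genetic risk needs exposure of about {low_genetic_risk:.2f}")
print(f"High genetic risk needs exposure of about {high_genetic_risk:.2f}")
```

Real gene-environment interactions are unlikely to be this tidy; the sketch only makes the direction of the relationship concrete.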

Deciphering precisely how, and to what degree, genetic and environmental factors influence brain function and disorder development is difficult, largely as a consequence of the vast genetic heterogeneity (variation) between individuals, but also as a result of difficulties in experimentally controlling for variation in environmental and psychological influences.10 In psychiatric disorders, the contribution of heritable factors depends greatly on the disorder itself and may be related to the severity of the illness. In schizophrenia and bipolar disorder, for example, genetics plays a large role in the development of disease, with twin studies putting heritability at 70-80%. In depression, severity may also indicate the degree of genetic influence, with studies putting the genetic contribution at 38% in the general population compared to up to 75% for hospitalized depressed patients.11 Among neurological diseases such as dementia, studies indicate that 1 in 4 individuals aged ≥55 currently has a family history of dementia. For these individuals, the risk of developing dementia is 20% compared to 10% in the general population, illustrating the heritability of the disease.12 From these data, it is evident that genetics plays a role in the development of disease but that this role is clearly shaped by outside factors, including one’s environment.
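The dementia figures quoted above (a 20% risk with a family history versus 10% in the general population) can be restated as a relative risk and an absolute risk difference. The short sketch below only reworks those two numbers; the function names are illustrative, and treating the general population as the comparison group is a simplifying assumption.

```python
def relative_risk(risk_group: float, risk_reference: float) -> float:
    """Ratio of the risk in the group of interest to the risk in the reference group."""
    return risk_group / risk_reference


def risk_difference(risk_group: float, risk_reference: float) -> float:
    """Absolute difference in risk between the two groups."""
    return risk_group - risk_reference


# Figures quoted in the text.
family_history_risk = 0.20      # individuals aged >=55 with a family history
general_population_risk = 0.10  # comparison group (a simplifying assumption)

rr = relative_risk(family_history_risk, general_population_risk)
rd = risk_difference(family_history_risk, general_population_risk)

print(f"Relative risk: {rr:.1f}")
print(f"Absolute risk difference: {rd * 100:.0f} percentage points")
```

Put this way, a family history corresponds to roughly twice the risk, or about ten additional cases per hundred people.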

Understanding the interplay of genetics and environment has yet to have a substantial impact on treatments for psychiatric disorders

The intermediate characteristics through which genes act, known as endophenotypes, are likely to reflect the actions of multiple genes and to relate to both genetic and environmental influences. As such, the risk of developing a psychiatric or neurological disorder is influenced by the way in which an individual’s genetic profile interacts with a particular set of risk factors.9

Psychiatric Disease

Environmental risk factors

Is it possible to identify environmental risk factors that have a causal relationship to the development of psychiatric disease or have an impact on brain function? After all, the identification of such risk factors would likely aid in efforts to prevent the onset of these disorders. While traditional risk factors such as stress, smoking, alcohol use, and diet influence the health and well-being of an individual and increase the risk of experiencing poor health, recent research suggests that other environmental risk factors are correlated with the development and maintenance of select psychiatric disorders, including schizophrenia.

How do behavioral and environmental risk factors for life expectancy differ from those associated with psychiatric disease? Such a question has, until recently, remained relatively unexplored. In recent years, however, three key environmental risk factors have been identified as carrying a causal relationship to psychiatric disease: urbanicity (living in or being raised in a city), social factors, and migration. These risk factors, independently and in combination, have been shown to carry an increased risk of psychiatric disorders and altered brain function. This article explores each of them further.

Urbanicity

Cities are catalysts of growth and opportunity, and understanding their impact on human life is of vital importance given the rapid migration of people to cities throughout the world. In 2014, 54% of the world’s population lived in cities. By 2050, that share is expected to grow to 66%, meaning that two out of every three individuals will live in an urban environment.13 There are many positive aspects of living in a city and certainly good reasons for moving from a rural home to a city environment. In general, and from a public health perspective, individuals who live in cities have seen a greater increase in their life expectancy over the past 40 years than those in rural areas due to lower rates of heart disease, diabetes, lung cancer, stroke, and suicide.14 All of this is clearly positive.

While the statistics regarding physical health conditions are encouraging for those living in urban environments, the situation in regard to mental health problems for those born or living in cities is less positive. To the contrary, rural residents have been shown to have lower rates of chronic mental health problems, including depressive and anxiety disorders, compared to their urban counterparts.15,16 Further, a perplexing and troubling statistic exists in regard to the mental health status of urbanites: those who are born and spend their first year of life in a city are two to three times more likely to develop schizophrenia than those born in rural environments.17,18,19

While the available evidence suggests that the environmental risk associated with living in a city and developing schizophrenia is conditional upon genetic factors,20 parsing out which risk factors are associated with living in an urban environment and the development of mental disorders is important and has proven to be more difficult. Given the rapid expansion of cities globally, achieving an understanding of the neural processes by which these associations occur may help to temper and prevent their onset.

Living in a city can have an impact on mental health

Through the use of the neurosciences, researchers have sought to answer some of these questions through the identification of neural processing differences in urban versus rural dwellers. A relatively recent initiative has focused on the identification of factors correlated with higher levels of stress in those born and living in cities. In order to examine neural processing in urbanites,

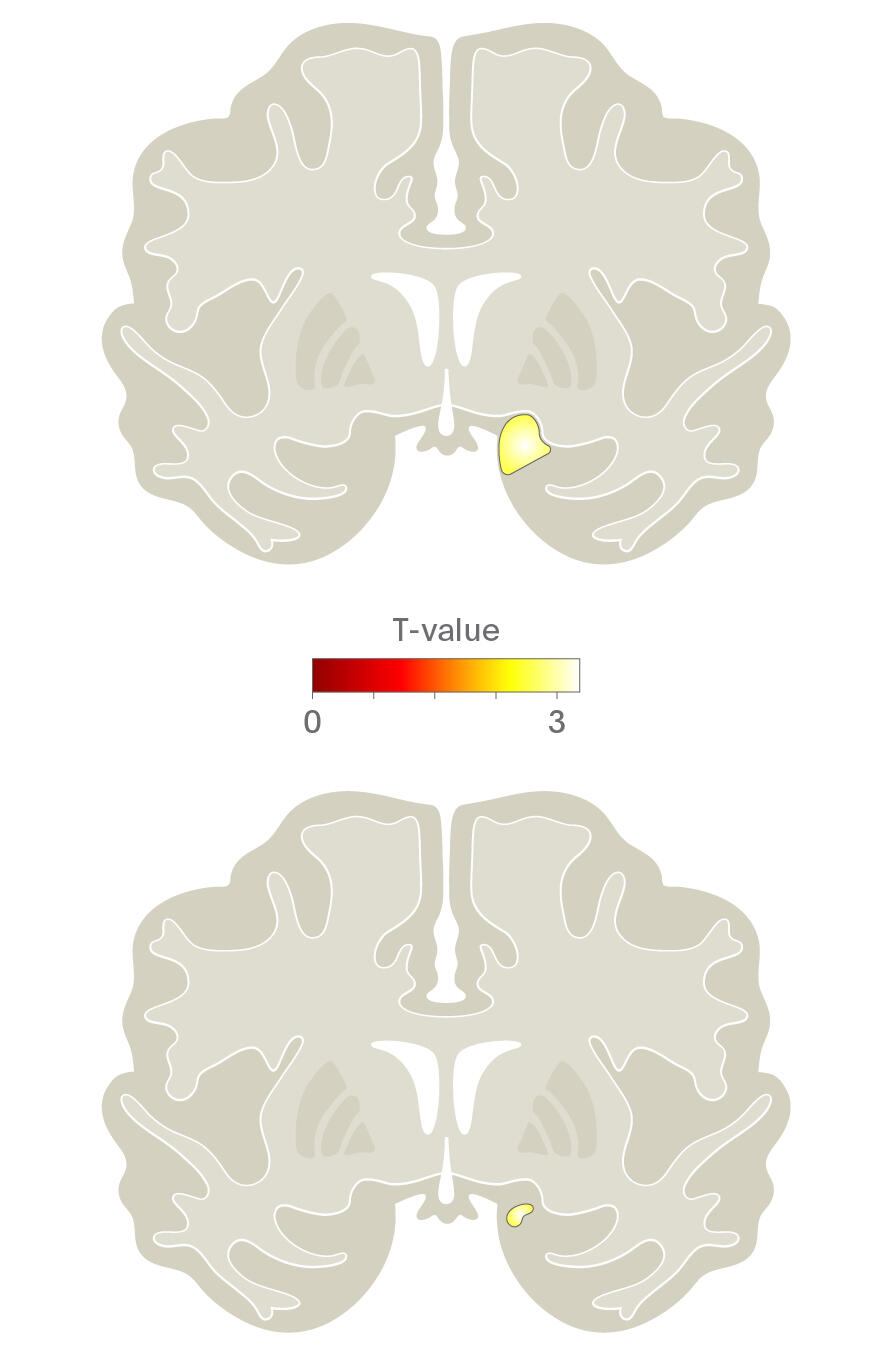

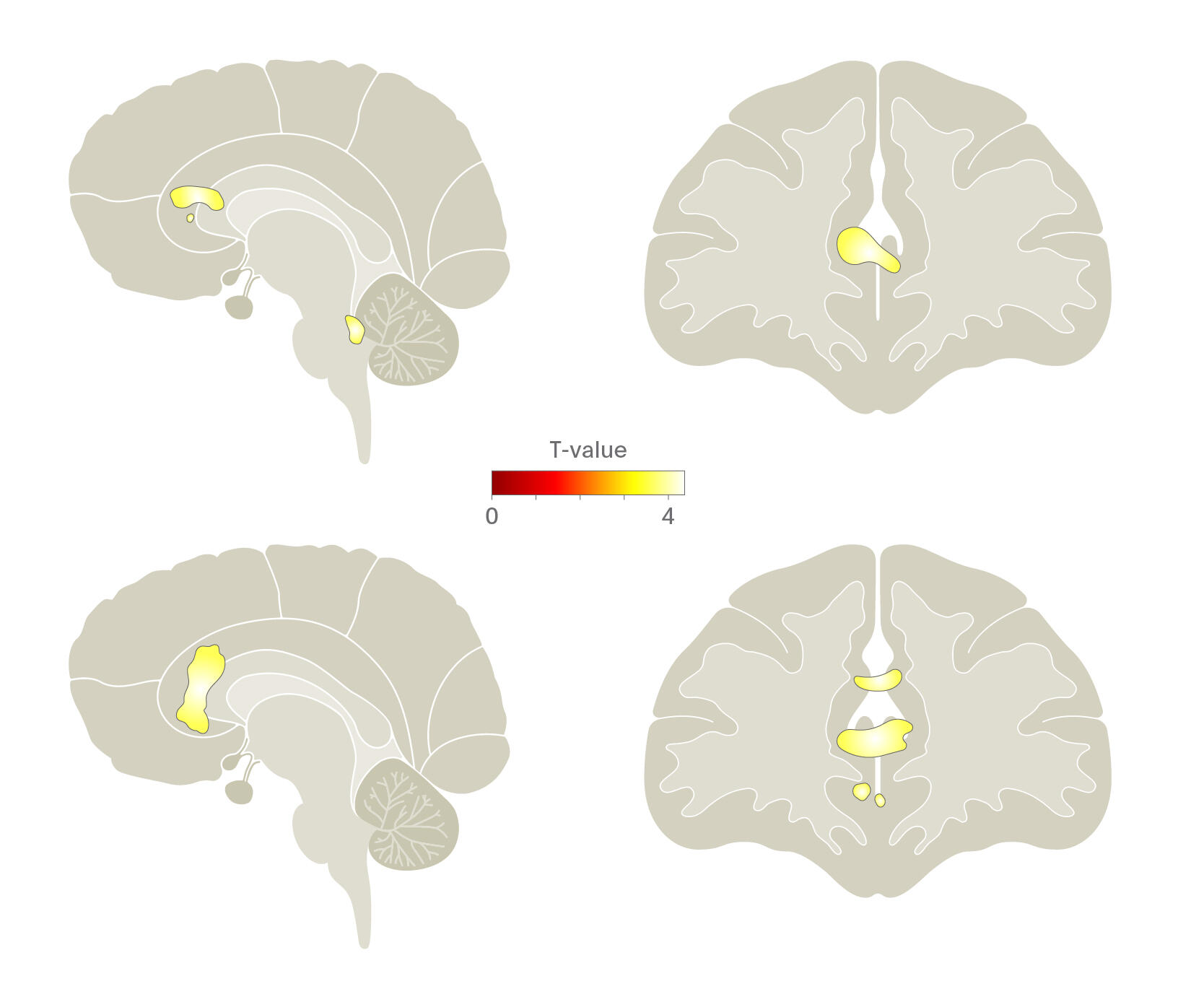

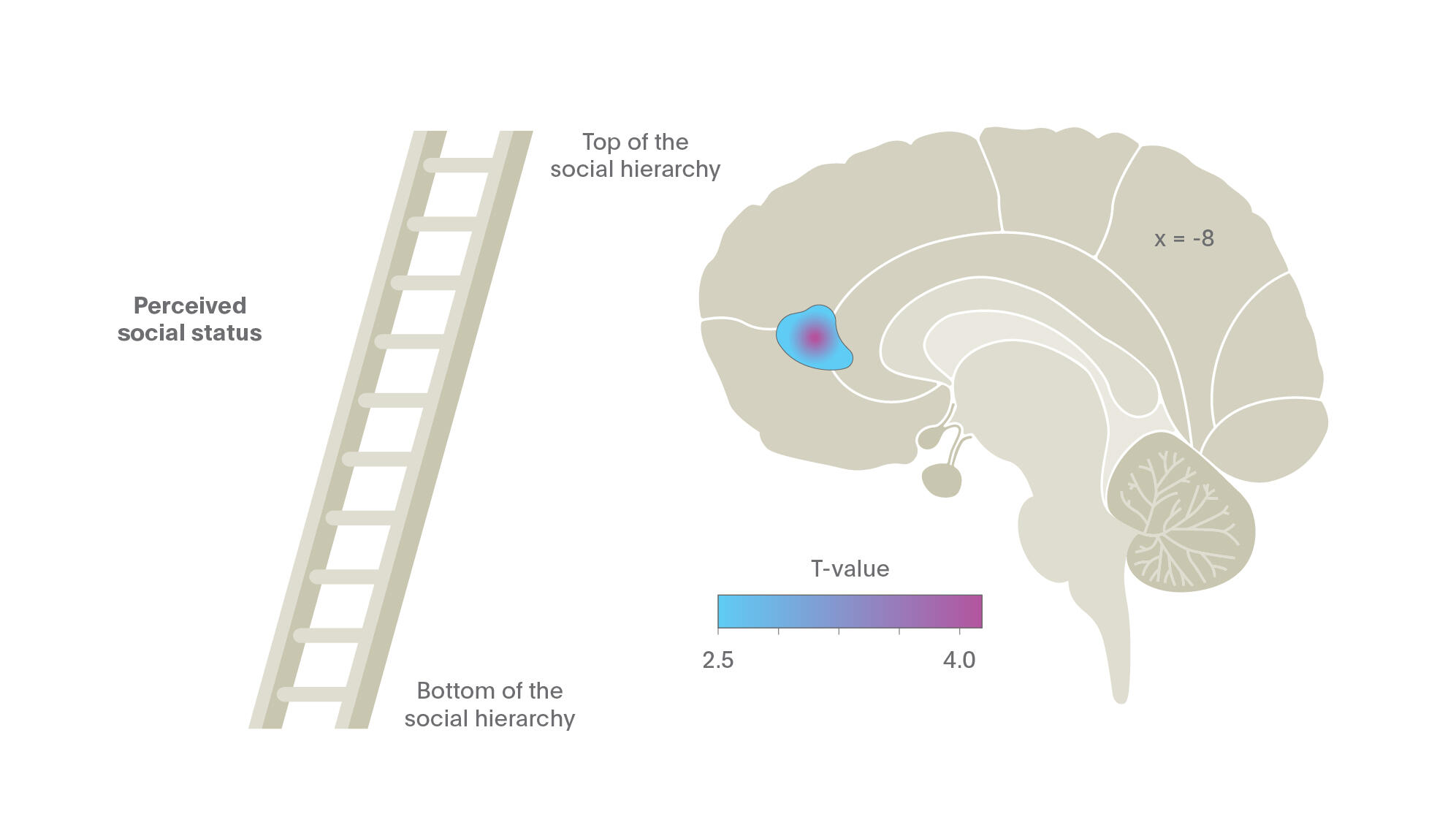

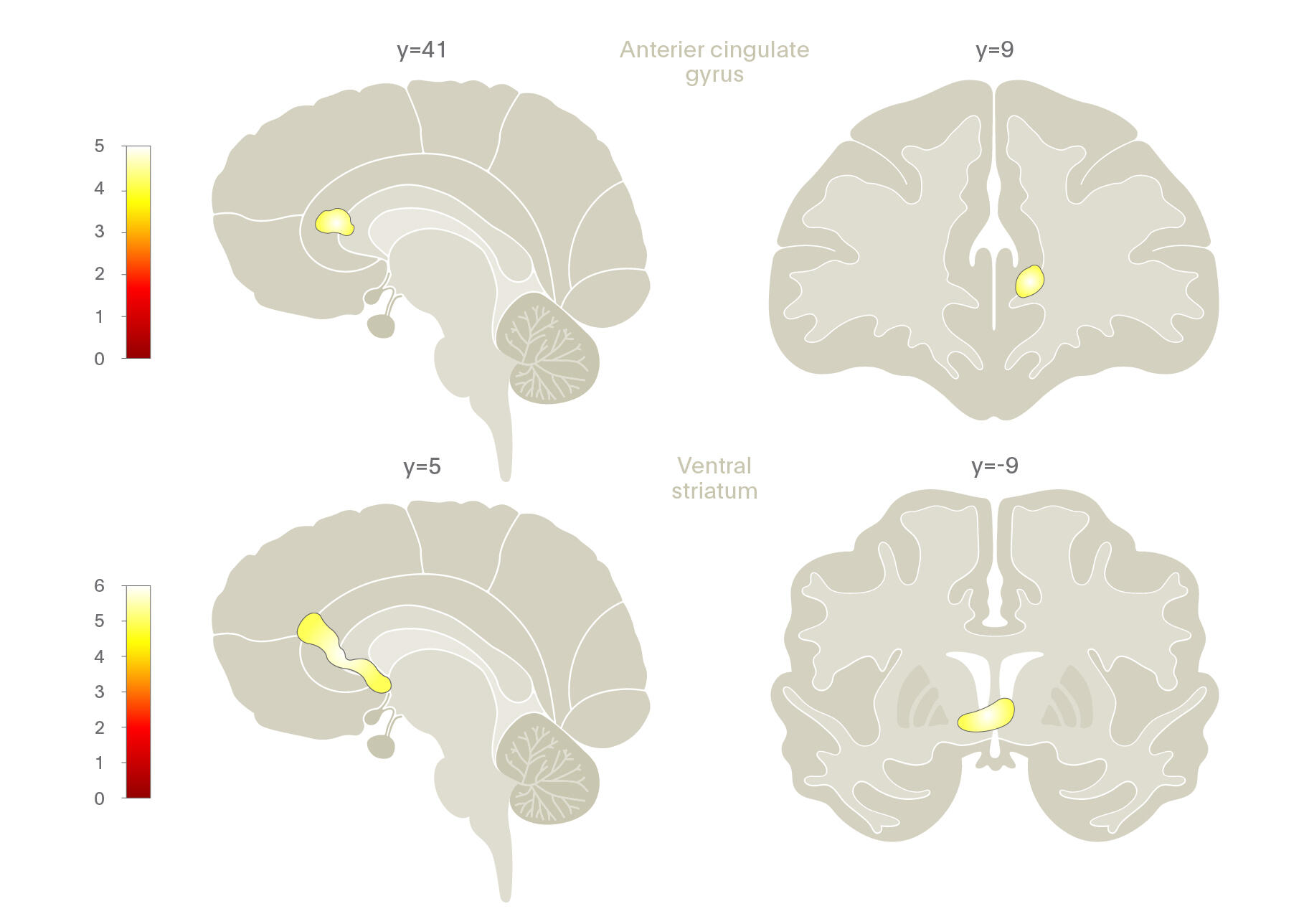

Lederbogen and colleagues (2011)21 used functional neuroimaging to examine cognitive stress processing differences between urban and rural inhabitants. They found that living in the city was associated with increased amygdala activity while growing up in an urban environment had a significant impact on activity in the perigenual anterior cingulate cortex (pACC), a region important in amygdala activity regulation, stress, and negative affect processing.

In other words, those who live or were raised in an urban environment process social, evaluative stress differently from those who did not.

In addition to functional differences, structural differences in the brain have also been found in city dwellers. A study of 110 healthy subjects who underwent structural neuroimaging found inverse correlations between being born or raised at an early age in an urban environment and grey matter volume in the right dorsolateral prefrontal cortex (DLPFC) and, in men, the pACC.22 These findings are particularly interesting given that previous research has associated these regions with early life stress and, in later life, with the development of schizophrenia.

It appears that living in the city, or spending the early years of life there, has a profound impact on both the structure and function of parts of the brain associated with stress and emotion processing. This raises the question, however, of what specifically may cause such functional and structural abnormalities in the brains of those who experience early life in an urban environment.

Social Factors and Migration

A variety of social risk factors have been studied in relation to their potential association with mental illness and neural processing, including socioeconomic status (SES), social perception, and social relationships.23 Social perception, the process by which humans form impressions of other people and make inferences about them, and social relationships, the feeling of being connected to those around oneself, are two such areas that have come into focus, especially in research examining the impact of the urban environment on health. Recent research into these areas and their association with health and brain function has shed light on interesting differences in neuronal activity, mortality rates, and the risk of developing mental health disorders.

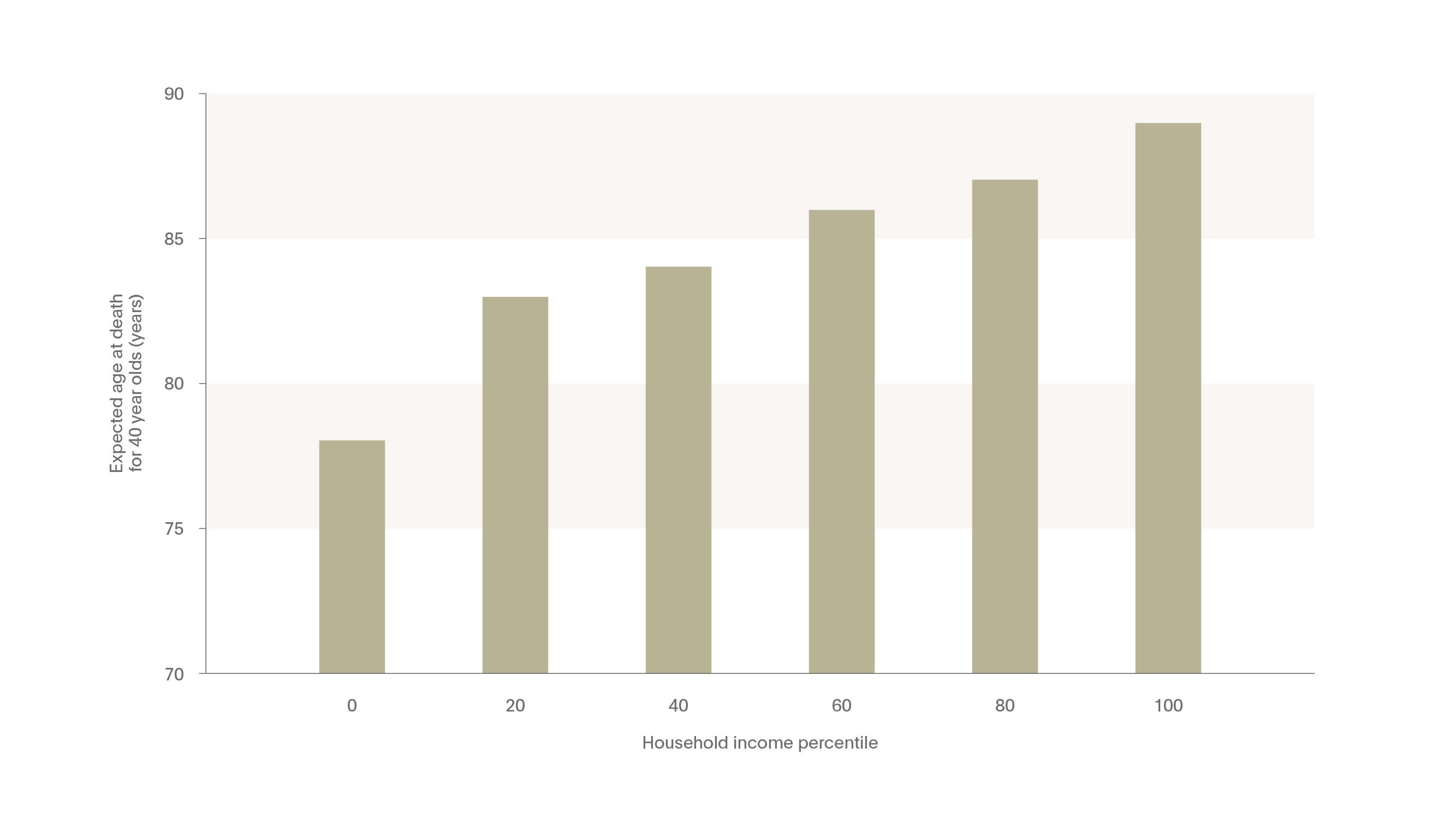

Until recent years, research largely focused on environmental risk factors associated with life expectancy. Understanding which risk factors are associated with living longer has aided researchers and clinicians in targeting specific behaviors (such as smoking, diet, and exercise) and environmental factors (such as stress or socioeconomic status) to reduce mortality rates. In a recent study in the Journal of the American Medical Association (JAMA), for example, researchers illustrated the correlation between wealth and life expectancy.24 The study, conducted using income and mortality data in the United States from 1999-2014, found a direct, nearly linear correlation between median household income and life expectancy: the more money one has, the longer one lives. In fact, as can be seen in the Figure below, those in the top 1% of household income were expected to live 10 years longer for women, and nearly 15 years longer for men, than those in the bottom 1% household income bracket.

Interestingly, for those in the bottom income quartile, who on average had the lowest life expectancy rates of all income groups, significant correlations were also noted between life expectancy and smoking, obesity, being an immigrant, income segregation, and population density.

While the Chetty et al. (2016) study illustrates the impact of wealth on life expectancy, other social factors have been shown to correlate with health, well-being, and neuronal activity. How people perceive their social status, for example, has recently been shown to have an impact on brain function. One such study performed fMRI on 100 healthy men and women, illustrating that those who perceived themselves as having a low social status compared to their peers had significantly reduced grey matter volume in the pACC, even after controlling for demographic factors and SES.25

Similar results have been shown in immigrant or migrant populations. Social integration is an important facet of feeling connected to one’s community and surroundings, yet many migrant populations feel disconnected from their surroundings, and migration itself has been shown to be associated with increased rates of mental illness.26 Migration has also been noted as a risk factor for altered brain function in a number of studies, especially in relation to how the brain processes social status and stress.

Dr. Meyer-Lindenberg discusses migration as a risk factor in psychiatric disease

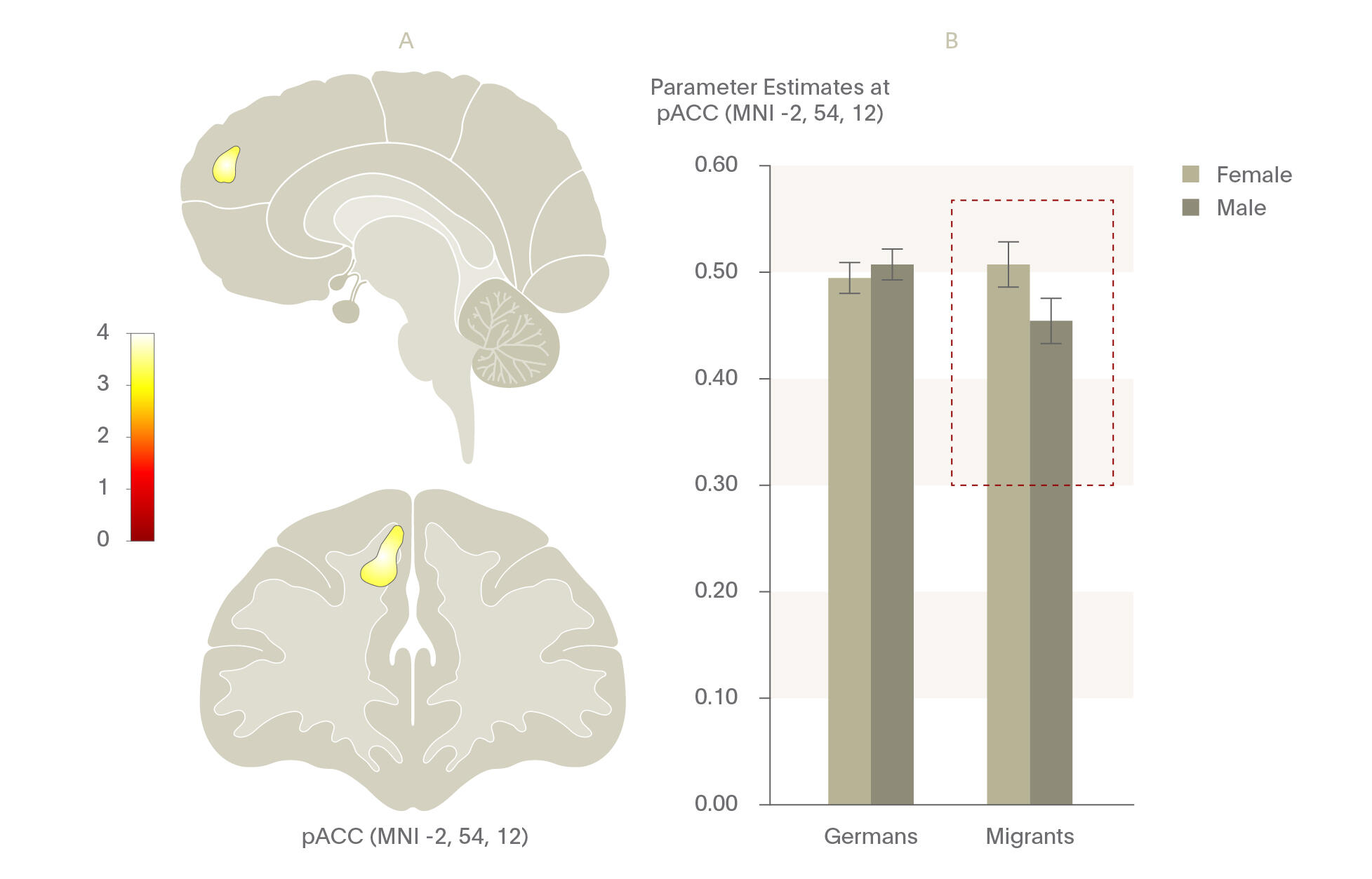

One study compared men with a migration background in Germany to a sample of German nationals using a stress processing functional neuroimaging task.27 Similar to results shown in fMRI tasks in those who grew up in cities, the researchers found that, compared with German nationals, those with a migration background demonstrated significant increases in pACC functional connectivity when under social stress and when they perceived themselves as being discriminated against. Further, significant gender differences have been noted between male and female migrants as compared to males and females of German origin. A recent, unpublished study by the same group found that male migrants had significantly smaller pACC volumes compared to females, illustrating possible cumulative risk effects for male migrants. Taken together, these studies illustrate the public health importance of addressing social perception, especially in migrant populations.

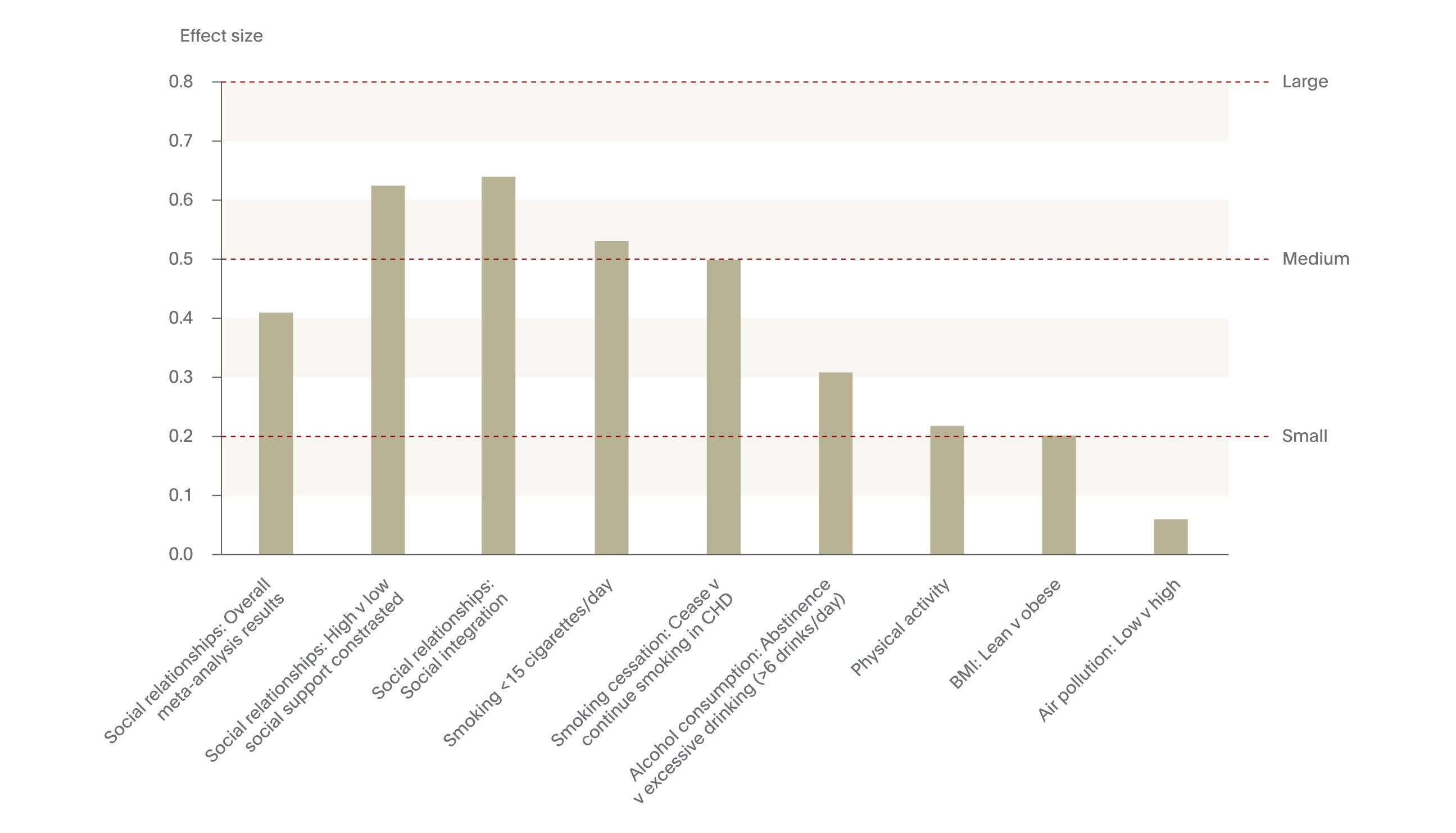

While social perception is indeed important, the precise impact of developing and maintaining quality social relationships within migrant and national populations also requires focus. Recent research suggests that social relationships developed and maintained both in the real world and online have a significant impact on brain function and life expectancy. One study illustrated that the size of one’s real and online social network was positively correlated with grey matter density in the amygdala,28 illustrating positive social cognitive processing with greater social connectivity. Such social relationship building and integration also has an impact on mortality. In a meta-analysis of 148 studies, including over 300,000 individuals, examining the impact of social relationships on mortality, researchers found that the only two factors with an effect size in the medium to large range for decreased mortality were based on having quality social relationships: the feeling of having solid support from others and the feeling of being socially integrated.29 These effect sizes were higher than those of other well-established, prototypical public health targets, such as smoking cessation, alcohol consumption, physical activity, and body mass index, illustrating the importance of social relationships in living longer.

Conclusions

The importance of identifying and intervening in modifiable environmental risk factors to delay or prevent the onset of psychiatric disease cannot be emphasized enough. The recent body of research illustrating disparities between income levels and health, functional and structural brain differences in urbanites, and social perception differences in migrants points to challenges that require attention in various facets of society. With an increasingly integrated and mobile population worldwide and with rapidly expanding cities, further research and focus are required to address these public health challenges and improve the mental health of our global population.

Neurological Disease

Just as in psychiatric disorders, a number of environmental factors play a role in neurological health, although the “environment” as it is discussed within neurology may be better defined through a broader lens. Societal, scientific, and ethical environments all play key roles in shaping the environment for those with neurological disease. In recent years, for example, global warming has affected the vectors of diseases with neurological manifestations, including Lyme disease and the Zika virus. While these diseases certainly pose public health and clinical burdens, one of the greatest public health challenges surrounding neurological disease worldwide stems from a rapidly aging global population. The UN estimates that the number of 80- to 89-year-olds will increase 5-fold from 2000 to 2050 worldwide.30 For most nations, regardless of their geographic location or developmental stage, the ≥80-year-old age group is growing faster than any other segment of the population.

As a result of people living longer, the proportion of the population with sequelae of diseases and injuries is also increasing substantially.31 This is particularly true of Alzheimer’s Disease (AD), which is predicted to increase dramatically over the coming 30 years. By 2050, the increase in the aging population of the world will cause the global prevalence of AD to quadruple, meaning that 1 in 85 individuals will be living with AD.32 In the US, nearly 3 million people ≥85 years of age are projected to have AD in 2030.33 While dementia is recognized as a critical public health problem, the World Health Organization (WHO) has noted that over half of all dementia cases may go unrecognized in primary care practices worldwide.34 Since the neurobiological damage associated with the onset of AD is presumed to be irreversible, early disease identification is critical for optimal patient management.35

In order to intervene early, strategies targeting the prevention of dementias and other neurological diseases have become a priority of global health organizations, including the WHO. Identifying modifiable risk factors in the development of dementias could help to reduce the global burden associated with AD.36

Dr. Serge Gauthier discusses some of the modifiable risk factors associated with neurological diseases such as dementia.

Studies have suggested that nearly one-third of AD cases may be preventable based on modifiable risk factors.36,37 Using meta-analytic methods, Norton and colleagues (2014) identified seven modifiable risk factors commonly associated with AD: diabetes, midlife hypertension, midlife obesity, physical inactivity, depression, smoking, and low educational attainment. They estimated that by reducing the prevalence of each of these risk factors by 10% to 20% each decade, the worldwide prevalence of AD would decrease by as much as 15% by the year 2050.
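Estimates of how many cases could be prevented by reducing a risk factor are commonly expressed as a population attributable risk (PAR). The sketch below uses Levin's formula, a standard epidemiological expression, as one way to see why lowering the prevalence of a risk factor lowers the share of cases attributed to it. The article does not describe the cited study's exact procedure, and the prevalence and relative risk values here are hypothetical placeholders rather than figures from that study.

```python
def population_attributable_risk(prevalence: float, relative_risk: float) -> float:
    """Levin's formula: share of cases attributable to a single risk factor.

    prevalence    -- proportion of the population exposed to the risk factor
    relative_risk -- risk in the exposed group divided by risk in the unexposed group
    """
    excess = prevalence * (relative_risk - 1.0)
    return excess / (1.0 + excess)


# Hypothetical values, chosen only for illustration.
current_prevalence = 0.30
assumed_relative_risk = 1.5

# A 10% relative reduction in the prevalence of the risk factor.
reduced_prevalence = current_prevalence * 0.90

par_now = population_attributable_risk(current_prevalence, assumed_relative_risk)
par_reduced = population_attributable_risk(reduced_prevalence, assumed_relative_risk)

print(f"PAR at current prevalence: {par_now:.1%}")
print(f"PAR after a 10% relative reduction: {par_reduced:.1%}")
```

Because several risk factors often occur together in the same person, single-factor figures of this kind cannot simply be added up across factors.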

Genetics and Biomarkers

As the population ages and the prevalence of AD rises worldwide, innovations in genetic testing and the identification of biomarkers have followed, resulting in earlier diagnostic opportunities and the potential to predict treatment response genetically for many neurologic diseases.38 The use of genetic and clinical biomarkers has become an increasingly important tool in diagnosing AD with high specificity and sensitivity.39 AD is characterized by both extracellular Aβ plaque depositions and intraneuronal inclusions of the microtubule-associated protein tau (total tau and phospho-tau-181), each of which is used to indicate probable AD and can be identified with testing.40 The identification of these markers allows clinicians to treat patients at an earlier stage of the disease, potentially delaying the progression of AD for a longer period of time and increasing the quality of life for those individuals and their family members.

Ethical considerations

While the clinical utility of biomarkers is clear for those exhibiting symptoms of AD, the use of biomarker testing to identify individuals who may be at risk of developing AD but have yet to exhibit symptoms raises numerous ethical questions. Although early diagnosis of AD is imperative in order to provide quality patient care,35 informing individuals that they have markers of a disease yet no symptoms can cause undue harm, increasing stress, anxiety, and depressive symptoms. Further, and from a clinical standpoint, the predictive value of biomarker test results for the development of clinical symptoms is unknown.41 It is entirely feasible that an individual testing positive for biomarkers associated with AD may never develop AD.42

Clinical considerations should also be taken into account. At this time, the clinical utility of providing an asymptomatic diagnosis of AD is extremely limited since no disease-modifying treatments currently exist. Further, the impact that such biomarker tests may have on health insurance availability, in the workplace, and practical activities such as driving has yet to be adequately vetted.

Given these concerns, how clinicians employ biomarker testing in AD is a topic in need of urgent attention. A recent survey of researchers in the Alzheimer's Disease Neuroimaging Initiative (ADNI) examined whether results of amyloid testing should be returned to cognitively asymptomatic participants in the study, mimicking a clinical environment. The study illustrated that the ADNI researchers supported a tempered disclosure of amyloid imaging results to participants but that guidance is needed on how and when to disclose biomarker results.43

Conclusions

With a rapidly aging population worldwide come significant neurological challenges, many of which have already begun to manifest themselves in terms of prevalence and cost. Alzheimer’s disease (AD) is one such condition, which is projected to increase four-fold in the coming 35 years. While genetic and biomarker testing has allowed for advanced screening for this and other neurological diseases, further efforts are needed in order to reduce the overall burden of AD on the individual, their families, and society. Addressing the often complex ethical concerns associated with pre- or early-symptomatic screening in order to facilitate quality care yet limit the burden to the patient must be undertaken in concert with further advancements in health care screening and treatment.

Overall Conclusions

Gaining a better understanding of the interplay between genetics and the environment can aid in reducing the overall burden of psychiatric and neurologic disease worldwide. The exciting work conducted in recent years in both psychiatric and neurologic disciplines has aided in better understanding how heritable and environmental factors interact to either worsen or improve our quality of life. Neuroscientists, in collaboration with sociologists and public health officials, have begun to shed light on the way in which the environment impacts brain function and, in some cases, structure. Due to advances in genetic testing, healthcare officials and researchers have enhanced their ability to identify those at risk for developing an illness early, at times even before symptoms manifest. While pre-symptomatic testing raises ethical concerns, the fields of psychiatry and neurology are advancing in the right direction and with the best of intentions for the patient and society. The future of the neurosciences in effecting meaningful change in psychiatry and neurology is strong, yet it requires collaborative efforts with a multi-disciplinary range of researchers, clinicians, patients, and advocates in order to improve the quality of life for the millions of people who suffer from psychiatric and neurologic diseases.